Why the SOC Analyst Experience Should Drive Your AI Automation Decision

Picture this: your security engineering team spends weeks evaluating AI automation platforms. They run proof-of-concepts, stress-test integrations, and debate which tool makes building playbooks the most elegant experience. They pick a winner. Deployment goes well. And then, six months later, analyst satisfaction scores are flat, MTTR hasn’t moved, and the platform feels like it was designed for someone else.

Because it was.

This is one of the most common and costly mistakes organizations make when selecting an AI automation platform. Security engineering runs the evaluation and builds the automations, but SOC analysts own the day-to-day experience.

The Evaluation Blind Spot

It makes sense for security engineers to lead platform evaluations. They configure integrations, write playbooks, and architect automation workflows, so they should have a prominent seat at the table.

But here’s the reality: once those playbooks are built and live, the engineering team steps back. The analysts step in. They triage alerts, investigate incidents, and make decisions under pressure. They are the primary users of the platform, every single day.

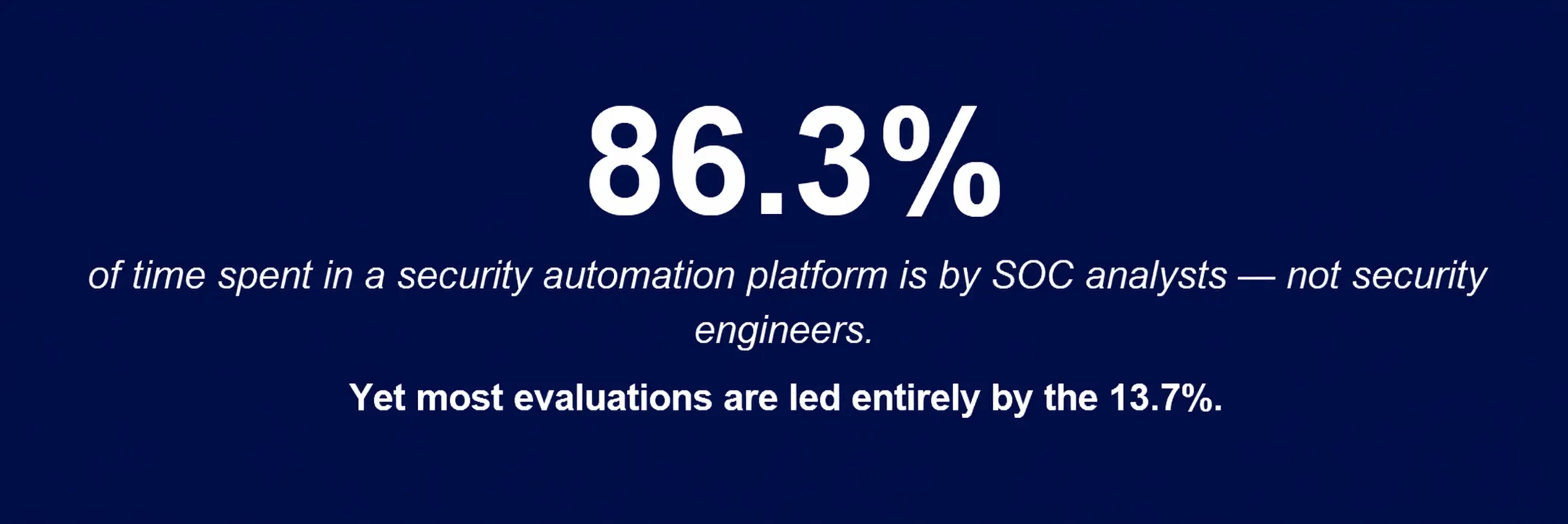

Swimlane’s research consistently shows that less than 15% of the time spent in a security automation platform involves orchestration and playbook-building tasks. The remaining 85% or more is analysts working cases, reviewing data, collaborating with teammates, and driving incidents to resolution.

When you optimize your platform selection for the 15%, you’re making the other 85% harder than it needs to be.

Platforms often split automation and case management into separate systems. When they do, analysts move between tools and context gets scattered.

“Just Use a Third-Party Case Management Tool”

Some vendors in the security automation space have actually suggested this as a solution: if the native analyst experience isn’t great, point your team to a third-party case management tool and bolt it on.

This advice misses the whole point.

Case management isn’t a nice-to-have add-on. It’s the operational heart of the SOC. It’s where context lives, where collaboration happens, where institutional knowledge is captured, and where leadership gets visibility into what’s going on. When that’s siloed into a separate tool — disconnected from your automation, your data, and your workflows — you don’t solve the problem. You paper over it.

Worse, you create friction. Analysts are now context-switching between systems, re-entering data, losing the thread between automated enrichment and human investigation. That friction costs time. Time costs money. And in security, time creates risk.

What Analyst Experience Means

Analyst experience directly affects speed, consistency, and the value the SOC shows to leadership.

When we talk about analyst experience, we’re not talking about a clean UI or a dark mode. We’re talking about whether the platform helps analysts move cases forward with less friction, better judgment, and more consistency. That means asking whether your platform delivers:

- Integrated case management that lives inside the same platform where automation runs — not across a data handoff to another tool.

- A Common Data Model that normalizes data across every integration, so analysts see consistent, structured information rather than raw, noisy output from dozens of tools.

- Purpose-built analyst applications with custom fields, tailored UIs, and workflows designed around how analysts actually think and triage — not around what was easiest for engineers to build.

- Dashboards and reporting that give analysts real-time visibility into case status and give leaders the metrics they need to demonstrate SOC value.

- AI-driven decision support that surfaces recommendations, automates repetitive work, and augments analyst judgment. AI in the SOC shouldn’t route around your analysts; it should make them faster and sharper. The best implementations surface enrichment, recommended dispositions, and a response plan built around the tools already in your stack before the analyst ever touches a case. The analyst still makes the call. That’s how you reduce MTTR and retain talent.
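To make the Common Data Model point concrete, here is a minimal sketch of what normalization can look like. Everything in it is an assumption for illustration: the `NormalizedAlert` shape, the two source tools, and their raw field names are hypothetical and do not describe any vendor's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NormalizedAlert:
    """Hypothetical common shape an analyst would see for every alert."""
    source: str
    severity: str  # "low", "medium", or "high"
    host: str
    summary: str


def from_edr(raw: dict) -> NormalizedAlert:
    # Assumed EDR payload: numeric severity, mixed-case device name.
    levels = {1: "low", 2: "medium", 3: "high"}
    return NormalizedAlert(
        source="edr",
        severity=levels[raw["sev"]],
        host=raw["device_name"].lower(),
        summary=raw["title"],
    )


def from_email_gateway(raw: dict) -> NormalizedAlert:
    # Assumed email gateway payload: upper-case risk label, different field names.
    return NormalizedAlert(
        source="email_gateway",
        severity=raw["risk"].lower(),
        host=raw["recipient_host"].lower(),
        summary=raw["subject"],
    )


alerts = [
    from_edr({"sev": 3, "device_name": "WS-042", "title": "Suspicious PowerShell"}),
    from_email_gateway(
        {"risk": "MEDIUM", "recipient_host": "ws-042", "subject": "Phishing link clicked"}
    ),
]

for alert in alerts:
    print(alert)
```

Both alerts end up with the same field names, the same severity vocabulary, and the same host spelling, so an analyst can see that they concern the same machine without translating between tool-specific formats.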

The Business Outcomes That Matter

Every CISO and SOC Director has a version of the same conversation with leadership: “What is the SOC doing, and is it working?” The business outcomes they’re held to aren’t abstract. They’re concrete:

- Risk reduction and mission assurance

- Operational efficiency and scale

- Analyst experience and knowledge retention

- Compliance, cost, and growth enablement

None of these outcomes are achievable if your analysts are frustrated, slow, or working around the platform rather than with it. Analyst experience is an operational efficiency lever. Analyst retention is a risk lever. Knowledge captured in integrated case management is institutional resilience. These aren’t soft benefits. They’re measurable, boardroom-level outcomes.

A platform that makes your engineers happy but your analysts miserable will fail to deliver on every single one of them.

A Better Evaluation Framework

The fix isn’t to sideline your security engineers in the evaluation — it’s to bring the whole team in. Here’s a simple reframe:

| Evaluation Area | Engineer POV | Analyst POV (Don’t Skip This) |
| --- | --- | --- |
| Playbook Builder | Ease of building automations, low-code flexibility, integration depth | How are results surfaced to analysts? Is enrichment readable and actionable? |
| Case Management | Can we customize fields and data structures? | Is it intuitive to triage, investigate, and document findings in one place? |
| Data Model | How normalized is ingested data across integrations? | Do I see consistent, structured data or am I interpreting raw tool output? |
| Reporting | Can we build custom dashboards for ops metrics? | Can I quickly see my caseload, priority queue, and SLA status at a glance? |
| AI Capabilities | What automation tasks does AI handle? | Does AI help ME make better decisions, or does it just reduce the number of tickets in my queue? |
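One way to put both columns into a single comparison is a weighted score. The sketch below is only an illustration: using the 15/85 time split as weights is an assumption, not a method prescribed here, and the platform scores are made-up numbers on a 1-to-10 scale.

```python
# Assumed weights, borrowed from the 15% / 85% time split cited earlier.
ENGINEER_SHARE = 0.15
ANALYST_SHARE = 0.85


def weighted_score(engineer_score: float, analyst_score: float) -> float:
    """Blend the two perspectives, rounded to two decimals for readability."""
    return round(ENGINEER_SHARE * engineer_score + ANALYST_SHARE * analyst_score, 2)


# Hypothetical scores: platform A delights engineers, platform B suits analysts.
platform_a = weighted_score(engineer_score=9, analyst_score=4)
platform_b = weighted_score(engineer_score=6, analyst_score=8)

print(f"Platform A: {platform_a}")
print(f"Platform B: {platform_b}")
```

With these example numbers, the platform engineers prefer scores lower overall once analyst time is weighted in. Your own weights and scoring scale may differ; the point is to make the analyst column count in proportion to how much the platform is used that way.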

The Most Important User in Your SOC

Security engineers are indispensable. The automation they build is what makes scale possible. But in the daily rhythm of security operations, the analyst is the most important user of your platform. They are the ones detecting threats, closing cases, and protecting the organization — shift after shift.

When you choose a platform that works for them — one with integrated case management, a consistent data model, purpose-built applications, and AI that augments rather than sidelines — you don’t just improve their experience. You improve every outcome your organization cares about: faster response times, higher analyst retention, better compliance posture, and a SOC that can demonstrate its value.

The next time your team evaluates a security automation platform, ask one simple question before you begin: “Who is this really for?” Then make sure the analysts who account for 85% of the time spent in the platform are in the room when you decide.

See the Swimlane Difference

Swimlane is built around the analyst experience, with integrated case management, a common data model, an application builder, and Hero AI working together in a single platform. Want to see how it looks from the analyst’s seat?